If you run an ADSL modem or any other kind of router behind an IPCop, getting to the router’s web GUI to view connection statistics and the like can be an annoying process. Usually you need to unplug the router and hook it up directly to your local LAN. Whatever the workaround, it’s a hassle.

I have come up with a way around that. It just involves using some nifty tricks via SSH, and I will show you how to configure your system with minimal fuss in order to get to your router’s web gui. It’s easy to modify so you can access any other port too.

You will need:

- A copy of PuTTY [http://www.chiark.greenend.org.uk/~sgtatham/putty/]

- A Web Browser

Firstly, you need to enable one or two options in your IPCop’s web gui. Access the IPCop web gui using your browser, and select System -> SSH Access.

Log in when prompted using your admin user.

If you haven’t already, enable ‘SSH Access’, and in particular, enable ‘Allow TCP Forwarding’, because the SSH trick relies on it. You should also enable at least ‘Allow password based authentication’.

Note that IPCop listens for SSH on port 222, not the usual port 22.

Save the settings, and you can close your session.

If you’re not using an IPCop, just ensure that you can access your firewall via ssh.
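Before moving on to PuTTY, it can save some head-scratching to confirm that the SSH port is actually reachable from your PC. The snippet below is a minimal sketch in Python, not part of the original walkthrough: the function name `ssh_port_open` is made up for illustration, and the default port of 222 simply mirrors the IPCop setting mentioned above.

```python
import socket


def ssh_port_open(host: str, port: int = 222, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout.

    Hypothetical helper for illustration. It only checks that something is
    listening on the port; it does not log in or verify that it is SSH.
    """
    try:
        # create_connection completes the TCP handshake; the "with" block
        # closes the socket again straight away.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, timed out, or unreachable: all count as "not open".
        return False
```

Calling `ssh_port_open("192.168.1.1")` (the address is a placeholder for your firewall’s LAN address) should return `True` once SSH access is enabled. If it returns `False`, recheck the SSH settings before blaming PuTTY.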

Now comes the “hard” part (which is actually pretty easy as you will see).

Extract the PuTTY archive somewhere useful, and create a shortcut to putty.exe somewhere convenient. I usually create the shortcut on my Quick Launch toolbar. Or you can just run the exe directly. It’s entirely up to you. I’m not going to tell you how to manage your software. 😉

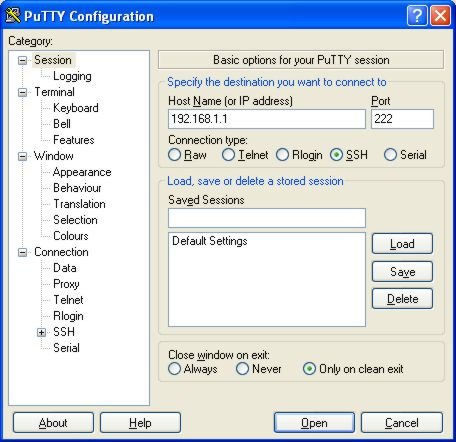

Run putty, and you will be presented with a confusing interface. The basic idea is that you type in the IP or hostname of the machine you want to connect to, select the type of connection, and hit enter, which launches the connection.

In our case, we are going to create a couple of saved sessions, so that all we need to do is double-click the entry in the saved sessions list, and the connection will be established, or the command will be executed.

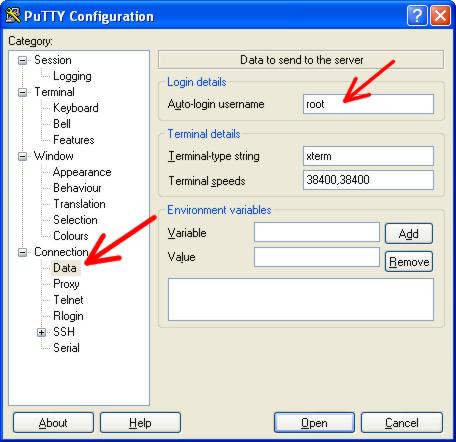

Now since we will ALWAYS be logging in to the firewall as user ‘root’, we can set the username so that we don’t always have to type it. This step is optional.

In the category list, expand ‘Connection’, and select ‘Data’. In the very top field, under ‘Auto-login username’ enter ‘root’ (without the quotes).

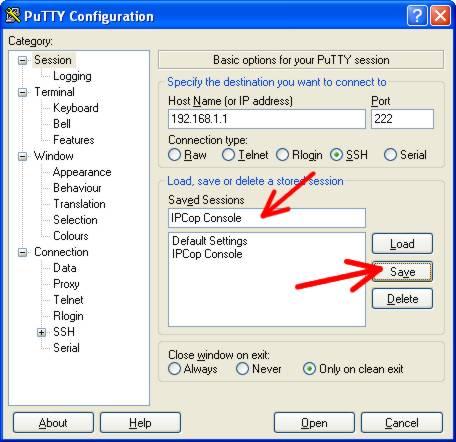

Select ‘Session’ at the top of the category list to return to the session configuration page. In the ‘Saved Sessions’ field, give your session a name. Use ‘IPCop Console’ if it will make you feel better, and then click the ‘Save’ button. You now have a saved session.
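A saved session can also be launched straight from a shortcut by using PuTTY’s command-line options (`-load`, `-ssh`, `-P`, `-l`). The sketch below only builds the command lines and prints them. The helper names are invented for illustration, `192.168.1.1` is a placeholder address, and `putty.exe` is assumed to be on your path (otherwise use the full path to wherever you extracted it).

```python
import subprocess


def putty_load_args(session: str) -> list[str]:
    """Arguments that open a session previously saved under `session`."""
    return ["putty.exe", "-load", session]


def putty_args(host: str, port: int = 222, user: str = "root") -> list[str]:
    """Arguments that open the same kind of SSH login without a saved session."""
    return ["putty.exe", "-ssh", "-P", str(port), "-l", user, host]


# list2cmdline applies Windows quoting rules, so names with spaces are
# wrapped in double quotes and the result can be pasted into a shortcut.
print(subprocess.list2cmdline(putty_load_args("IPCop Console")))
print(subprocess.list2cmdline(putty_args("192.168.1.1")))
```

The first line printed is `putty.exe -load "IPCop Console"`, which you could use as the target of a shortcut so that the session opens without going through the configuration window at all.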

Since the session is already technically loaded, we can click the ‘Open’ button at the bottom of the window to launch the session, or you...
